We have all been there: standing in front of an open closet, staring at a mountain of clothes, yet feeling like we have “nothing to wear.” For many, the solution involves scrolling through social media for inspiration or, more commonly, digging through old photos to remember that one perfect outfit worn at a past event.

Google is addressing this specific pain point with a new artificial intelligence feature that transforms the Google Photos app into a smart, digital wardrobe. By automatically cataloging the clothes you already own and have worn, the tool aims to simplify styling decisions and reduce the friction of getting dressed.

How the AI Wardrobe Works

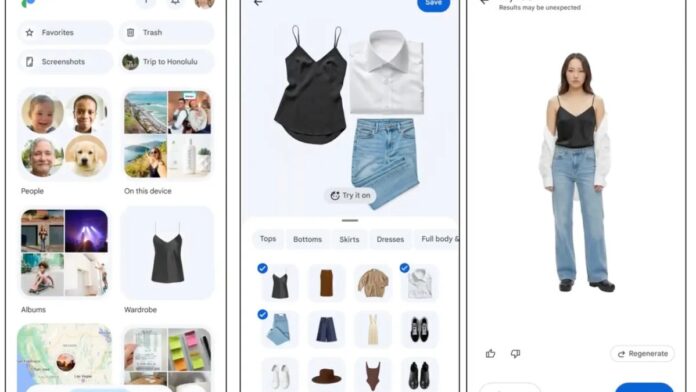

The new feature, which begins rolling out this summer to Android users followed by iOS, leverages AI to scan your existing photo library. It identifies clothing items—such as tops, bottoms, shoes, and jewelry—and organizes them into a searchable digital collection.

This automation solves a common organizational problem: knowing what you own without having to dig through your closet. Users can filter their collection by category to locate specific items quickly, effectively turning their camera roll into an inventory system.
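
For readers curious what a category-filtered inventory might look like in practice, here is a minimal Python sketch. Google has not published how the feature is built, so everything below is an assumption made for illustration: the `ClothingItem` fields, the `build_wardrobe` and `filter_by_category` helpers, and the sample items are all hypothetical, and the detection step itself is replaced by a hand-written list.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ClothingItem:
    """One detected garment. All fields are hypothetical."""
    name: str
    category: str       # e.g. "tops", "bottoms", "shoes", "jewelry"
    source_photo: str   # the photo the item was found in


def build_wardrobe(items):
    """Group items by category so each category can be looked up directly."""
    wardrobe = defaultdict(list)
    for item in items:
        wardrobe[item.category].append(item)
    return dict(wardrobe)


def filter_by_category(wardrobe, category):
    """Return the items in one category, or an empty list if there are none."""
    return wardrobe.get(category, [])


# Hand-written stand-ins for what an image-recognition step might produce.
detected = [
    ClothingItem("blue linen shirt", "tops", "IMG_0001.jpg"),
    ClothingItem("white sneakers", "shoes", "IMG_0001.jpg"),
    ClothingItem("black jeans", "bottoms", "IMG_0002.jpg"),
    ClothingItem("striped tee", "tops", "IMG_0003.jpg"),
]

wardrobe = build_wardrobe(detected)
tops = [item.name for item in filter_by_category(wardrobe, "tops")]
print(tops)
```

Grouping by category up front means each filter is a single dictionary lookup rather than a scan of the whole photo library, which is one plausible way a large camera roll could stay quick to browse.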

Virtual Styling and Mood Boards

Beyond simple organization, the feature introduces styling capabilities that bridge the gap between inspiration and execution. Taking cues from platforms like Pinterest, it lets users create digital mood boards by mixing and matching items from their saved collection.

This functionality allows for:

– Scenario-based planning: Create specific boards for occasions like “wedding guest,” “work outfits,” or “casual weekend.”

– Social validation: Share these virtual outfits with friends for feedback before committing to a look.

– Efficiency: Eliminate the need to physically try on multiple combinations to see what works visually.

The “Try-On” Feature: Promise and Limitations

A standout component of the update is the virtual “try-on” preview. Users can select items from their digital closet to see a generated image of how the outfit might look on their body. This technology utilizes AI image generation models to superimpose clothing onto the user’s photo.

However, it is important to understand the current technical boundaries of this feature:

– Fit Approximation: The AI does not understand garment sizing, fabric drape, or cut. The resulting images are rough approximations rather than accurate simulations of fit.

– Ownership vs. Shopping: Unlike last year’s AI try-on feature in Google Search—which focused on items you were actively shopping for—this new tool focuses exclusively on clothing you already own.

Privacy and Data Usage

In an era where AI training data is a frequent concern, Google has clarified its stance on user privacy regarding this feature. The company states that images uploaded for the try-on feature will not be used for AI training, integrated into other Google services, or sold to third parties. This distinction is crucial for users wary of how their personal biometric and lifestyle data might be leveraged.

Conclusion

Google’s new wardrobe feature represents a shift from passive photo storage to active lifestyle utility. By organizing existing assets and offering low-stakes virtual styling, it addresses the daily dilemma of outfit selection. While the virtual try-on remains an approximation rather than a precise tool, the ability to visualize and plan outfits using items you already own offers a practical, privacy-conscious step forward in digital fashion management.